What will limit AI first: algorithms… or electricity? ⚡

A conversation with the engineers designing the power and cooling behind AI

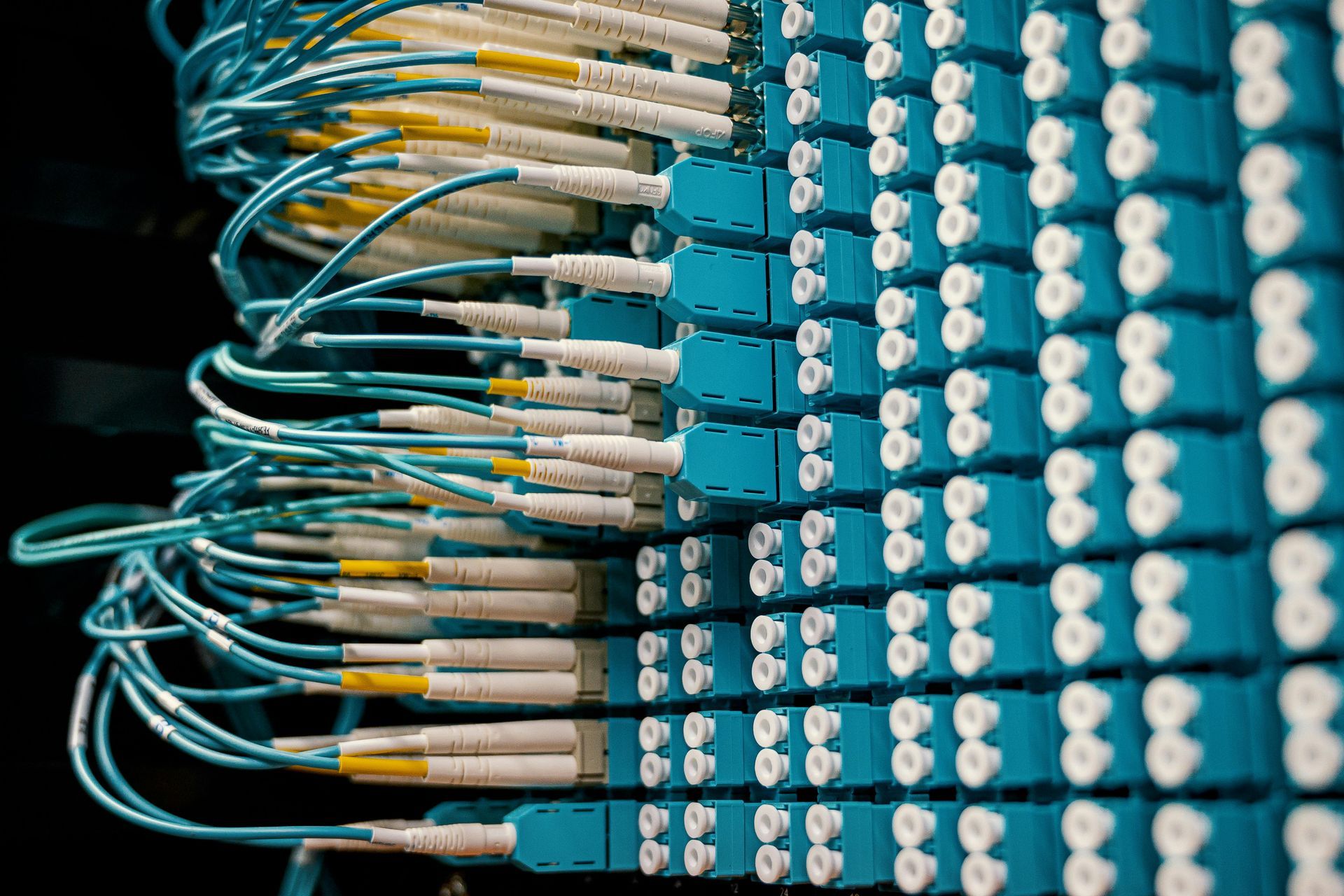

Artificial intelligence may appear intangible: algorithms running somewhere “in the cloud”. In reality, AI depends on some of the most energy-intensive infrastructure ever built. Behind every AI model sits a massive system of electrical equipment, cooling plants, pumps, generators, and mechanical infrastructure working continuously to keep servers operating within precise thermal limits.

At Green Impact Advisory, understanding and designing this infrastructure is part of our work at the intersection of energy systems, sustainability, and advanced engineering. Two of the technical authorities involved in this work are Vlad Weber, Technical Director, and Silviu Ulmeanu, MEP Director. With extensive experience in mechanical and electrical systems for complex buildings and infrastructure projects, they design the systems that ensure data centers operate safely, efficiently, and continuously.

In a recent discussion with them, we explored how the rapid expansion of artificial intelligence is transforming the way data centers are engineered.

From Cloud Storage to AI Infrastructure

The engineering team previously worked on the design of a cloud storage data center, delivering the full building services package:

- HVAC systems

- electrical infrastructure

- low-voltage and very-low-voltage systems

- fire protection systems

- plumbing and rainwater systems

- technical assistance during construction

These facilities already operate at very high reliability standards. Servers run continuously and produce significant heat that must be removed through carefully designed cooling systems. However, according to the engineers, AI data centers represent a completely different scale of infrastructure.

A Different Order of Magnitude

Traditional data centers already consume significant amounts of electricity. AI infrastructure pushes this demand to a much higher level.

In the feasibility study analyzed by the engineering team, electrical power requirements for AI computing are almost thirty times higher than those of conventional cloud facilities.

For example, a facility with four AI computing halls can reach 40–50 MW of installed electrical power. At that scale, the project begins to resemble a small power plant integrated with computing infrastructure. To support these loads, engineers often need to design dedicated high-voltage substations that step grid electricity down from the 110 kV transmission level to 20 kV distribution within the site.

To guarantee uninterrupted operation, AI data centers also rely on large modular UPS systems capable of delivering megawatts of stable power, ensuring that computing operations continue even during power disturbances.

The Real Challenge: Heat

Almost all electrical energy consumed by servers ultimately becomes heat.

Removing this heat efficiently is one of the most important engineering challenges in modern digital infrastructure.
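To give a feel for what this means in practice, the sketch below estimates how much chilled water must circulate to carry away a given heat load, using the standard relation heat = mass flow × specific heat × temperature difference. The 10 MW heat load and the 6 K supply/return temperature difference are illustrative assumptions, not figures from the study, and the function name is hypothetical.

```python
# Approximate specific heat of liquid water, in kJ/(kg*K).
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.18


def chilled_water_flow_kg_s(heat_load_kw: float, delta_t_k: float) -> float:
    """Mass flow (kg/s) needed to remove heat_load_kw with a supply/return
    temperature difference of delta_t_k, from Q = m * cp * dT."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_kw / (WATER_SPECIFIC_HEAT_KJ_PER_KG_K * delta_t_k)


# Assumed inputs for illustration only (not taken from the feasibility study).
flow = chilled_water_flow_kg_s(heat_load_kw=10_000, delta_t_k=6.0)
print(f"Required chilled water flow: {flow:.0f} kg/s")
```

Under these assumptions the loop has to move several hundred kilograms of water every second, which is why pump and pipe sizing become major design items.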

Cooling the Machines That Train AI

To manage this thermal load, the proposed cooling concept combines several technologies:

- water-cooled chillers

- closed-loop cooling towers

- seasonal free-cooling operation

- chilled water supply at approximately 20°C

The system is designed with 6+1 redundancy: six units are sized to carry the full design load, with one additional unit on standby, so cooling capacity remains available even if one major component is offline. One of the key design strategies is to take advantage of local climate conditions. During winter and cooler months, the chillers can be partially or completely shut down, while the cooling towers reject heat directly to the outside air. This free-cooling approach significantly reduces energy consumption.
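The redundancy logic can be sketched in a few lines. The example below assumes a hypothetical cooling load of 42 MW and treats all units as identical; both are simplifying assumptions for illustration, and the helper names are not from the study.

```python
def unit_capacity_kw(design_load_kw: float, duty_units: int) -> float:
    """Capacity each unit needs so that the duty units alone cover the load."""
    if duty_units <= 0:
        raise ValueError("duty_units must be positive")
    return design_load_kw / duty_units


def available_capacity_kw(unit_kw: float, installed_units: int, failed_units: int) -> float:
    """Total capacity left after a number of installed units go offline."""
    return unit_kw * max(installed_units - failed_units, 0)


# Assumed design load for illustration only.
DESIGN_LOAD_KW = 42_000
DUTY_UNITS, STANDBY_UNITS = 6, 1  # the 6+1 arrangement described above

unit_kw = unit_capacity_kw(DESIGN_LOAD_KW, DUTY_UNITS)
installed = DUTY_UNITS + STANDBY_UNITS

for failed in (0, 1, 2):
    capacity = available_capacity_kw(unit_kw, installed, failed)
    status = "load covered" if capacity >= DESIGN_LOAD_KW else "shortfall"
    print(f"{failed} unit(s) offline: {capacity:.0f} kW available, {status}")
```

With one unit offline the remaining six still match the design load; a second simultaneous failure would leave a shortfall, which is the limit of what an N+1 scheme protects against.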

Energy Efficiency: Measuring Performance with PUE

Data center efficiency is commonly measured using Power Usage Effectiveness (PUE), which compares total facility energy consumption with the energy used by IT equipment.

For the analyzed configuration, the calculated PUE was approximately 1.16, potentially rising to around 1.19 under real operating conditions.

For comparison:

- older data centers often operated at PUE values above 2.0

- modern hyperscale facilities typically achieve 1.2–1.4

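These improvements translate into significant energy savings at large scale. At a PUE of 1.16, cooling and other overheads add about 16% on top of the IT energy, compared with 100% or more at PUE values above 2.0.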
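The PUE comparison is easy to reproduce. The sketch below applies the definition (total facility energy divided by IT energy) to a hypothetical 1 MW IT load; the load is an assumption chosen for round numbers, while the PUE values are the ones quoted above.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return total_facility_kwh / it_kwh


def overhead_kw(it_load_kw: float, pue_value: float) -> float:
    """Non-IT power (cooling, losses, auxiliaries) implied by a given PUE."""
    return it_load_kw * (pue_value - 1.0)


IT_LOAD_KW = 1_000  # assumed IT load, for illustration only

for label, value in [("older facility", 2.0),
                     ("analyzed design", 1.16),
                     ("analyzed design, real conditions", 1.19)]:
    print(f"{label}: PUE {value:.2f} -> {overhead_kw(IT_LOAD_KW, value):.0f} kW overhead")
```

For the same IT load, the overhead shrinks from roughly the size of the IT load itself to a small fraction of it.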

The Next Frontier: Immersion Cooling

As AI workloads continue to grow and rack densities increase, traditional cooling approaches may eventually reach their limits.

One of the technologies attracting increasing attention is immersion cooling, where servers are submerged in dielectric fluids that remove heat directly from the hardware.

This approach enables much higher computing densities while significantly reducing cooling energy consumption. Some immersion cooling systems can achieve PUE values below 1.05, pushing the efficiency of digital infrastructure even further.

The Sustainability Challenge

Large AI data centers inevitably raise questions about sustainability.

For the analyzed facility, electricity consumption corresponds to roughly 46,700 tonnes of CO₂ emissions per year, based on Romania’s electricity mix.
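An estimate of this kind multiplies annual electricity consumption by the emission factor of the grid. The sketch below shows the arithmetic only: the 10 MW average draw and the 0.3 t CO₂/MWh factor are placeholder assumptions, not the inputs used in the study, so the result is not meant to reproduce the figure above.

```python
HOURS_PER_YEAR = 8_760


def annual_energy_mwh(average_power_mw: float) -> float:
    """Energy drawn over one year at a constant average power."""
    return average_power_mw * HOURS_PER_YEAR


def annual_co2_tonnes(energy_mwh: float, grid_factor_t_per_mwh: float) -> float:
    """Emissions attributed to consumed electricity for a given grid emission factor."""
    return energy_mwh * grid_factor_t_per_mwh


# Placeholder inputs for illustration only (not the study's values).
energy = annual_energy_mwh(average_power_mw=10.0)
emissions = annual_co2_tonnes(energy, grid_factor_t_per_mwh=0.3)
print(f"{energy:.0f} MWh/year -> {emissions:.0f} t CO2/year")
```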

Water consumption is another important factor in cooling design. By using hybrid cooling towers capable of operating in dry mode during winter, the system reduces water consumption while maintaining cooling performance. The estimated Water Usage Effectiveness (WUE) is approximately 0.63 liters per kWh of IT energy.
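To translate the WUE figure into a volume, the sketch below multiplies it by annual IT energy. The WUE of 0.63 L/kWh comes from the estimate above; the 10 MW average IT load is a hypothetical value chosen for illustration.

```python
HOURS_PER_YEAR = 8_760


def annual_water_m3(wue_l_per_kwh: float, average_it_load_kw: float) -> float:
    """Annual water use in cubic metres for a given WUE and average IT load."""
    it_energy_kwh = average_it_load_kw * HOURS_PER_YEAR
    litres = wue_l_per_kwh * it_energy_kwh
    return litres / 1_000  # 1 m3 = 1,000 litres


# WUE from the text; the IT load is an assumption for illustration only.
water = annual_water_m3(wue_l_per_kwh=0.63, average_it_load_kw=10_000)
print(f"Estimated water use: {water:.0f} m3 per year")
```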

These considerations illustrate why energy efficiency and resource management are becoming central to data center design.

Engineering the Infrastructure Behind AI

Artificial intelligence is often perceived as a purely digital phenomenon. In reality, it relies on complex physical infrastructure that must operate continuously and reliably.

Behind the algorithms are buildings filled with:

- electrical distribution systems

- chillers and cooling towers

- pumps and heat exchangers

- backup generators and UPS systems

- advanced monitoring and control platforms

Designing these systems requires a combination of electrical engineering, thermodynamics, mechanical design, and sustainability expertise.

As AI continues to expand globally, the challenge for engineers will be to ensure that the infrastructure powering this transformation is not only powerful, but also efficient and responsible.

At Green Impact Advisory, this intersection between digital infrastructure and sustainable engineering is where we believe the future of energy systems is being shaped.